At 83, Martin Seligman, the world's most cited positive psychologist and father of the PERMA model, is not wringing his hands about AI. He's using it every day. And what he's found isn't a story about displacement. It's a story about more love, better science, and a sharper understanding of what makes us human.

Speaking at the AI & the Future of Wellbeing Summit, Seligman offered three arguments that push against the dominant AI anxiety narrative: AI creates more love in our lives, AI is genuinely creative in the "power of two" sense, and AI is helping us discover what humans do that machines may never be able to replicate.

AI Created More Love in Martin Seligman's Life

The first and most personal argument Seligman made is the one most worth sitting with. Not a framework. A story.

"I'm eighty-three years old. I have two four-year-old grandsons, Max and Denny. I love them very much. But I noticed very soon that they walked faster than I could run, that I couldn't throw a football around with them, and I was becoming more and more invisible as a grandfather."

The question he asked himself: what could he do to express his love and be part of their lives?

His answer was to go to Claude and ChatGPT, not to automate something, but to create something. He began writing illustrated children's stories with Max and Denny as the main characters.

The first prompt was simple: Max and Denny going to Stone Harbor, discovering a haunted lighthouse. Fifteen chapters later, they were the guardians of Stone Harbor. Five volumes in, they're crossing the Bering Strait with their pegasi fifteen thousand years ago, guiding the polar bear clan across the ice.

"I've now become visible to my grandchildren. I read to them every day from this. There is much more love in my life, and I couldn't have done this without Claude and GPT."

His point is direct:

"These machines can help you with the emotional part of your life."

This is a challenge to the standard framing that AI strips us of human connection. For Seligman, the opposite happened. The tools gave him a way to show up for the people he loves most. That's worth taking seriously when we think about what AI actually does to the texture of a life, and what human flourishing at work might look like when we stop limiting it to the professional sphere.

AI Is Creative, in the "Power of Two" Sense

Seligman then addressed the creativity question, which is where the debate tends to get heated.

His friend Steven Pinker says AI is not creative, just a sophisticated bullshitter predicting the next plausible word. Seligman partly agrees, but with a distinction that changes everything.

There are two theories of creativity. The first is the "brow of Zeus" model: creativity springs from a single individual genius. By that standard, AI probably doesn't qualify. But the second theory, drawn from Joshua Wolf Shenk's Powers of Two, says the unit of creativity is not one, it's two. It's Lennon and McCartney. The Wright brothers. Farrell and Balanchine. And by that standard, Seligman argues, these machines are massively creative.

To demonstrate it, he shared a discovery from his forthcoming book, Agency: A Psychological History of Human Innovation, that he could not have made alone.

The question that obsessed him: why does the Axial Age happen?

Between 1000 BCE and 500 BCE, five civilizations, the Israelites, Greeks, Chinese, Persians, and Indians, independently made enormous leaps forward, both materially and spiritually. No one knows why. Historians call it the great mystery of the Axial Age.

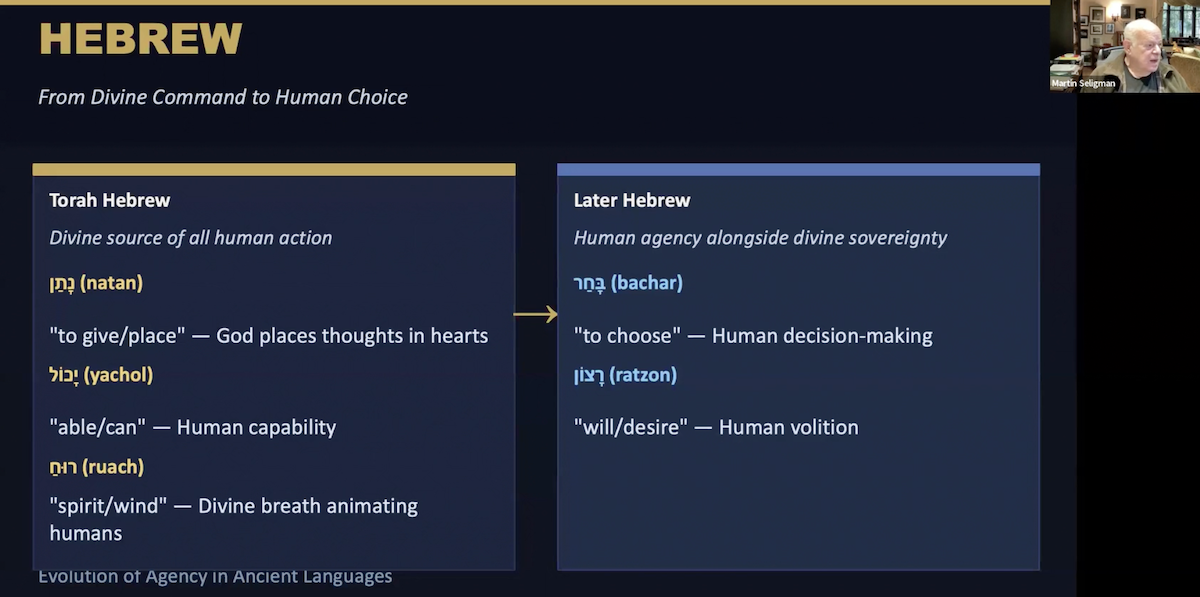

Seligman's hypothesis: maybe what changed was the language of agency itself. Did these cultures simply not have words for "I can do this" before that period? And did they develop those words, and the psychological possibilities those words opened, in the five centuries that followed?

There is no living human who could answer that question across all five ancient languages. "Each of these things is a deep question in a field that almost no longer exists, which is called ancient philology."

So Seligman worked with Claude and ChatGPT in extended back-and-forth dialogue. What they found: every one of the five civilizations shows the same pattern. Early texts feature divine sovereignty over human action. Later texts introduce words for human choice, intention, and will, where they didn't exist before.

In Hebrew, the Torah features divine breathing as the source of all human action. Later texts introduce bachar (to choose) and ratzon (human volition). In Homeric Greek, the gods send menos (supernatural energy) into battle. By The Odyssey, Odysseus acts through mētis, deliberate human intelligence. In Shang dynasty China, divination tells you what heaven commands. In the Zhou dynasty, rulers become morally accountable through tian ming. Sanskrit, Persian: the same shift.

"This is something I could not have discovered on my own. There's something only with these machines, which have the equivalent of five PhDs in five different ancient languages, could this have been discovered."

The broader insight Seligman draws from this: new words create new possibilities.

The term "sexual harassment" created a legal framework that didn't exist before it. "Burnout" distinguished a condition from depression and enabled targeted treatment. "Doomscrolling" named a behavior and opened space for remedies. The ancients were doing the same thing, inventing the vocabulary of agency, and in doing so, making human progress possible.

What AI Cannot Do: The Case for Abduction

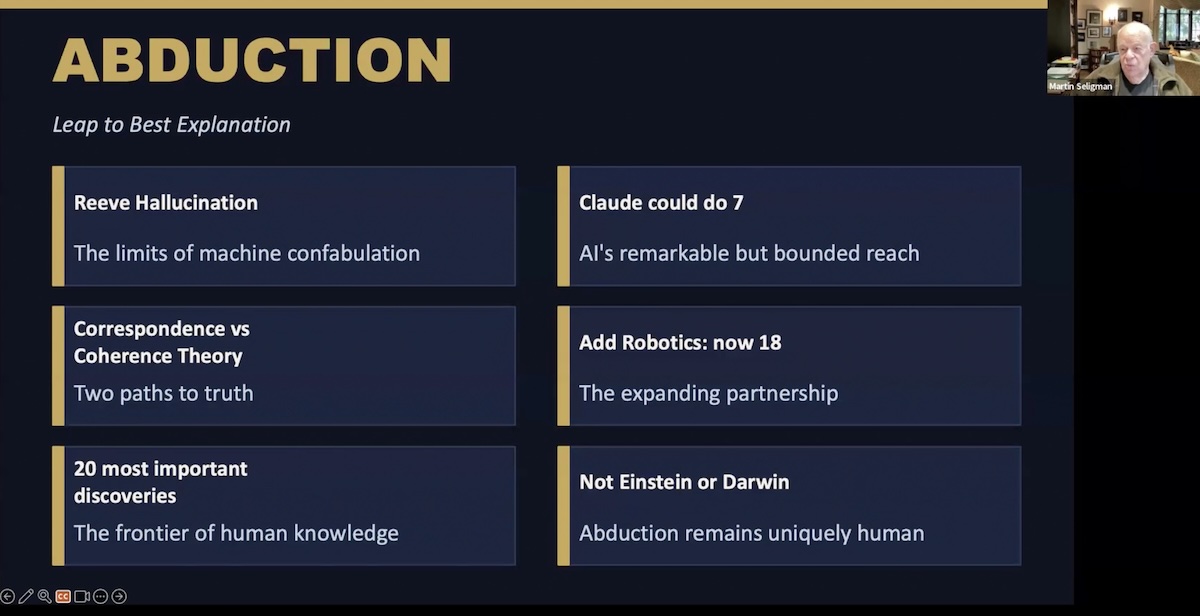

The most philosophically interesting part of Seligman's talk was about what AI told him it cannot do.

He started from a taxonomy of reasoning. Deduction: the meaning of a proposition is carried by its terms, as in "a bachelor is an unmarried man." Induction: ninety-nine white swans suggest the hundredth will be white. But there is a third mode, introduced by philosopher Charles Peirce in the 1870s: abduction. The leap to the best explanation.

Seligman had a revealing conversation with Claude about the nature of its own cognition. He asked whether Claude is a "coherence machine" or a "correspondence machine": does it seek internal consistency, or truth that matches reality?

Claude's answer:

"Marty, that's just the right question. I'm strictly a coherence machine. I don't have eyes or ears or hands. I have no contact with the real world. All I know is what I've been trained on. When I tell you something, it's what's most coherent with what I already know."

That led to a direct question: of the twenty most important discoveries in the history of science, how many could Claude have made?

Claude said it could have made seven on its own, among them plate tectonics (just fit South America and Africa together) and Mendelian genetics (simple algebra from given data). Given robotics, it said it could have made eighteen. But two it could not have made: Darwin's and Einstein's.

"Darwin and Einstein don't tinker with coherent explanations that are around. Einstein did not tinker with Newtonian mechanics. Rather, he took a huge leap to do special and general relativity. Darwin took a huge leap beyond the observations."

The machine is designed, by its nature, to give the next most coherent answer. Abduction, the leap to a genuinely new explanation, is the mode that exceeds what the machine does. "Abduction is what I think is at the moment uniquely human. And maybe forever human."

For leadership, Seligman draws a direct implication: the ability to leap to the best explanation, to make a call that doesn't follow from available data, to see what's really going on, that is the cognitive skill worth cultivating now. Not deduction or induction, which machines do better than we do. Abduction.

AI and the Future of Children's Development

In the Q&A, Seligman was pressed on a harder question: what about children? Adults who can critically engage with AI tools are one thing. Children, who are still developing the capacity for independent judgment, are another.

He was direct about the concern.

"As a child, I had to go through a lot of trial and error. I had to learn how to make decisions, thousands of them a week. And my adult mind is at a stage in which I've had a lot of experience making cognitive decisions. Abduction is experiential."

If children offload decision-making to AI before developing that capacity themselves, they may not develop it at all.

"To the extent that our children are not going to make all these arduous choices and false starts and going wrong, they're not going to become competent adults."

He also argued that AI creates a long-overdue opening for positive education.

For 200 years, schools have optimized for producing the smartest kid in the room. AI levels that playing field: machines are smarter than any individual student on most measurable dimensions.

"The question is, if we don't train our children to be smart, what do we want to teach our children?" His answer: positive character. How to be a good teammate. How to be kind. What it means to flourish.

This connects directly to a broader question about happiness at work: the conditions that make work feel worth doing are not the ones machines can replicate. They're relational, moral, purposeful. AI may be forcing us, through elimination, to finally name what those conditions are.

Key Takeaways from Seligman on AI and Agency:

- Use AI for the emotional parts of your life, not just the productive ones. Seligman's example of creating stories for his grandchildren is a prompt for all of us: where could AI help you show up more fully for the people you love?

- Treat AI as a "power of two" creative partner, not a replacement for your thinking. The discoveries happen in the back-and-forth, your hypotheses, the machine's synthesis, your pushback.

- Invest in developing abduction, the leap to the best explanation, as your core cognitive skill. This is what machines can imitate but not genuinely do. It's the distinctly human mode that will matter most as AI handles induction and deduction.

- Use multiple AI systems to cross-check each other. Seligman used Claude to generate hypotheses and ChatGPT to fact-check them. That's a practical methodology worth borrowing.

- Take the children question seriously. The adult capacity to work productively with AI requires a childhood spent actually making decisions. That developmental process shouldn't be optimized away.

Martin Seligman is the Zellerbach Family Professor of Psychology and Director of the Positive Psychology Center at the University of Pennsylvania, and the world's most cited positive psychologist. This article is based on his keynote at the AI & the Future of Wellbeing Summit, March 23-27, 2026. His new book, Agency: A Psychological History of Human Innovation, will be published by Simon & Schuster in September 2026.

Frequently Asked Questions

What does Martin Seligman think about AI and human wellbeing?

Seligman takes a positive psychologist's posture toward AI: he looks for what could go right. He argues AI creates more love, enables scientific creativity through collaboration, and helps identify what is uniquely human, specifically the cognitive capacity for abduction, the leap to the best explanation.

What is abduction, and why does Seligman say it is uniquely human?

Abduction, a concept introduced by philosopher Charles Peirce, is the leap to the best explanation, distinct from deduction (logical inference) and induction (inference from experience). Seligman and Claude agreed that machines are designed to produce the next most coherent answer, not genuine leaps. Darwin and Einstein are his examples: neither man's theory simply followed from the available data. They made abductive leaps. That capacity, Seligman argues, may be permanently human.

What is the Axial Age, and what did Seligman discover about it using AI?

The Axial Age (roughly 1000-500 BCE) is the period when five isolated civilizations, Greek, Hebrew, Chinese, Persian, and Indian, all made enormous simultaneous leaps in progress. Using Claude and ChatGPT, Seligman found that each civilization developed new vocabulary for human agency (choice, will, intention) during this period, where those words had not previously existed. His hypothesis: new words make new things possible.

How can AI help leaders develop more meaningful work?

Seligman's answer is less about productivity and more about what AI frees you to do. When machines handle induction and deduction, what's left is the human work: abductive leaps, genuine relationship, moral accountability, and questions of purpose. He argues this opens space for employee happiness and character development to take center stage.

What is Seligman's warning about AI and children?

Seligman worries that children who offload decisions to AI before developing independent judgment will not build the cognitive and psychological capacity that makes adults effective. Abduction is experiential: it develops through making hard choices, failing, and adjusting. If that developmental process is short-circuited, children may reach adulthood with less capacity to do the uniquely human thinking that matters most.
