Raising children in the age of AI has become one of the defining challenges for senior leaders and parents who understand both the scale of the coming disruption and how little the mainstream conversation actually helps.

The question isn't just about screen time or which coding camp to enroll in. It goes much deeper: How do you raise a child toward flourishing in a world where the traditional paths to meaningful work are being redrawn in real time?

Why This Moment Is Different

First off: why this question, and why now?

Every generation of parents has faced technological disruption. But the current shift differs from previous transitions in three ways:

The pace is unprecedented.

The World Economic Forum's Four Futures for Jobs report maps four scenarios for 2030 shaped by the intersection of AI capability and workforce readiness.

Even in the most optimistic scenario, "Supercharged Progress," many jobs disappear, new occupations emerge and scale up fast, and workers increasingly become agent orchestrators.

In the least optimistic, "The Age of Displacement," rapid tech advances outpace workers' reskilling, causing talent shortages, increased automation, unemployment and social division.

A child in primary school today will enter a workforce shaped by whichever of these scenarios, or whatever mix of them, actually takes hold.

The cognitive stakes are new.

Unlike a calculator or a search engine, generative AI can write a student's essay, reason through their problems, and simulate friendship. As Harvard researchers and others have noted, previous technologies reshaped what humans did. AI, used without intentionality, can reshape who they become.

The anxiety is measurable.

A 2026 survey found that 72% of parents believe AI tools should be part of their child's education.

But the same survey documents deep anxiety about dependency, lost human connection, and whether "AI in education" means anything beyond faster homework. Most parents are not anti-AI. They are pro-thoughtfulness.

The Jobs Crisis That's Already Here

To understand what's at stake for today's children, start with what's happening to their older peers right now.

Entry-level job postings in the U.S. plunged 35% from January 2023 to June 2025, according to labor research firm Revelio Labs. AI was a key contributor, especially for roles in data engineering, software development, customer service, compliance, and financial analysis.

The Cengage Group Graduate Employability Report found that only 30% of 2025 college graduates secured entry-level jobs in their chosen fields. Recent graduate unemployment hit 5.7% in Q4 2025, worse than at any point during the 2008 financial crisis.

In the UK, tech graduate roles fell by 46% in 2024, with projections for a further 53% drop by 2026. In India, among 400 engineering graduates at one leading institute, fewer than 25% secured job offers by spring 2026.

The mechanism behind this collapse is structural, not cyclical.

The traditional "deal" of entry-level work, trading rote labor for mentorship, is dead. The "learning curve" is being automated, leaving early-career professionals stranded between AI agents and senior workers.

"Early-career jobs are the training wheels for a career," said Alison Lands, Vice President of employer mobilization at Jobs for the Future. "Data suggests that AI is disrupting the traditional career ladder as we know it."

The WEF's "no regrets" conclusion: Across all four futures scenarios, the Forum stresses that the choices made by businesses and policymakers in the next few years will play a decisive role in which scenario we land in.

That applies to parents too. The decisions made in the next few years, about what skills, what character traits, and what identity foundations to invest in, will shape whether children inherit something closer to the "Co-Pilot Economy" (another of the WEF's four scenarios) or "The Age of Displacement", whichever jobs AI ends up replacing.

The Anxious Generation Problem Gets More Complicated

Before AI became a workplace issue, smartphones had already done significant damage to children's development. Jonathan Haidt's research in The Anxious Generation documented the scale.

"We have over-protected children in the real world and under-protected them online. They began social distancing as soon as they got smartphones." – Jonathan Haidt

Haidt's four proposed norms (no smartphones before 14, no social media before 16, phone-free schools, and more unsupervised play in the real world) have gained significant policy traction.

But the AI layer now adds a second dimension.

Haidt has warned that AI is moving us "from the idea that AI enables you to know everything" to the idea that "AI allows you to do everything." AI agents "are going to give us omnipotence," he said. "And that would be horrible for children."

Researchers building on Haidt's work have raised concerns about "intellectual deskilling" in younger generations.

As generative AI tools like ChatGPT gain popularity, children risk becoming overly reliant on automated solutions, potentially undermining metacognition and critical thinking. The instant gratification provided by AI-generated responses can discourage the development of patience and perseverance.

Children's social interactions with AI may also shape how they approach interactions with people. Because children are still developing their understanding of the world, they may be particularly prone to attributing human-like properties to AI, which can distort their expectations of these technologies.

A recent UC Irvine study found that children between three and six already believe smart devices have thoughts and feelings. This is the baseline for a generation that will grow up with AI companions and AI tutors.

What Skills Actually Survive

The most important research question for parents is: what capabilities should we be building in children that AI cannot replicate?

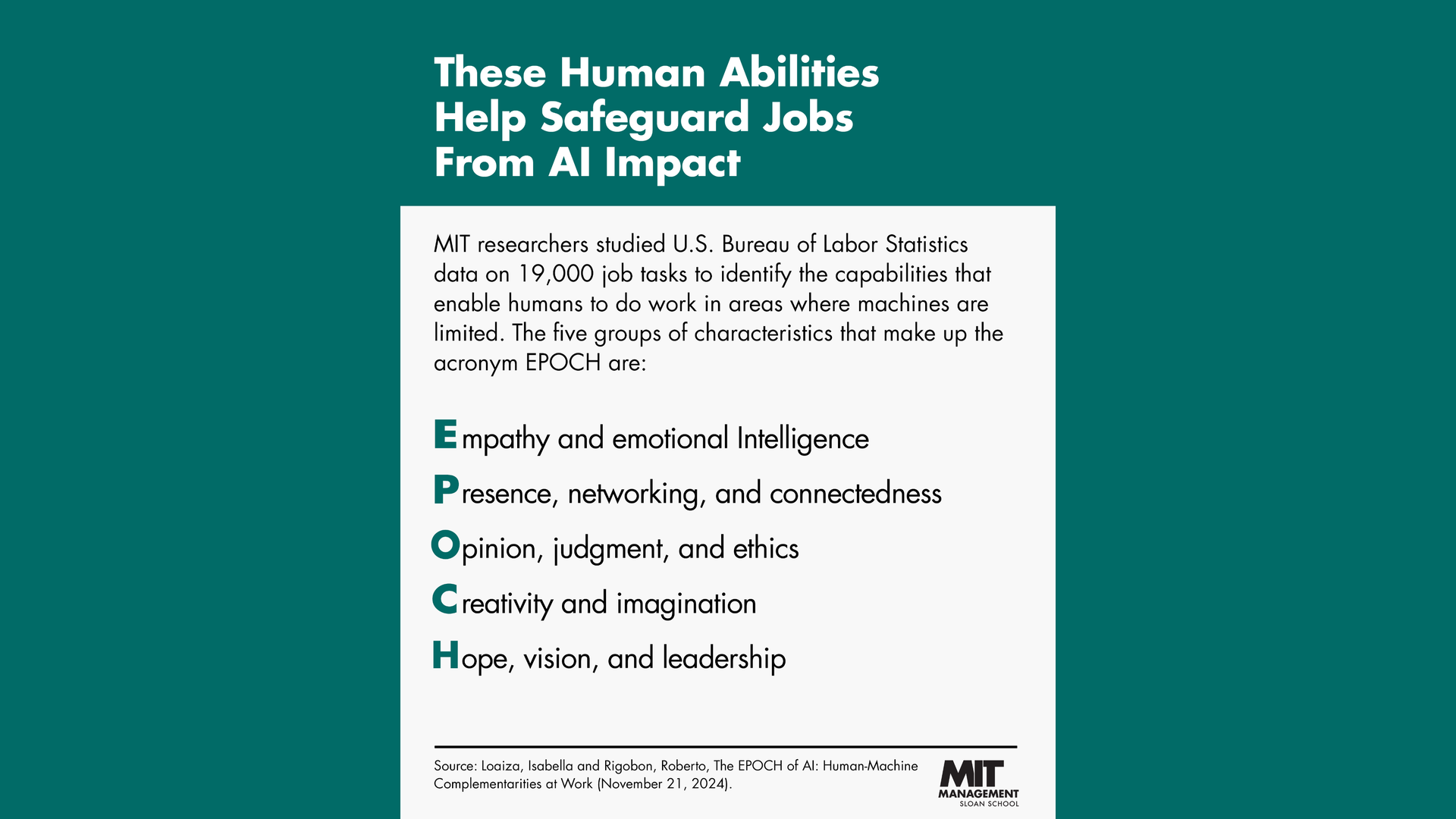

MIT Sloan researchers have developed a framework called EPOCH, one of the most rigorous answers to this question. EPOCH outlines five groups of uniquely human capabilities that AI struggles to replicate: Empathy, Presence, Opinion/Judgment, Creativity, and Hope.

Critically, the MIT researchers reject the "soft skills" framing. "We deliberately don't call these 'soft' skills," said MIT Sloan professor Roberto Rigobon. "A 'hard' skill, like solving a math problem, is comparatively easy to teach. It is much harder to teach a person these critical human skills, such as hope, empathy, and creativity."

McKinsey's analysis supports this. As AI absorbs more chores like sifting information, organizing data and drafting basic content, workers will have to lean more heavily on the capabilities machines do not yet offer: judgment, relationship-building, critical thinking and empathy.

The World Economic Forum frames it as an agency question:

"AI has accelerated a reality that education has been slow to confront: information no longer differentiates people, agency does. The abilities to ask better questions, navigate ambiguity, empathize and turn ideas into action are not extracurricular; they are the defining competencies."

For parents, the practical implication is direct.

Children need experiences that build these capabilities: experiences that develop judgment (making real decisions with real stakes), presence (being in a room, reading people, leading teams), creativity (making something new, not just consuming or remixing), empathy (understanding others' interior worlds), and hope (maintaining motivation and direction when answers are not clear).

None of these are built primarily in a classroom.

What Schools Are (and Aren't) Doing

The Honest Assessment

Most school systems were designed for an industrial economy: show up, absorb a fixed body of knowledge, get a credential, get a job. As educator Aran Levasseur writes:

"What makes a human life meaningful when machines can replicate or even surpass many of our abilities? This is not a question students will face someday. They face it now."

The gap between what's needed and what's being delivered is wide. The WEF's Future of Jobs Report 2025 projected that, by 2030, labor-market shifts driven by technology (AI included) and other macro trends will create 170 million jobs and displace 92 million. But a smooth transition depends on education and reskilling systems that are currently moving at a fraction of the speed required.

The global AI-in-education market is valued at roughly $7 billion in 2025 and is projected to grow by more than 36% a year over the next decade. But market size and genuine educational transformation are different things. Most AI integration in schools today consists of teachers using AI to create lessons faster rather than fundamentally rethinking what students need to learn.

A growing number of school districts are emphasizing AI literacy, but the conversation is already moving beyond it. As Richard Culatta of ISTE+ASCD put it:

"What we really need to do is stop spending so much time teaching AI literacy and we need to start teaching how students should be using AI for learning."

The gap between knowing AI exists and knowing how to learn with it, while preserving the deep human capabilities it can crowd out, remains largely unaddressed.

Where Schools Are Falling Short

Several systemic failures stand out in the current research:

Rewarding the wrong things. Standard curricula still reward memorization, compliance, and speed: exactly the qualities where AI already wins. Assessment systems are built around what machines now do cheaply.

Equity of preparation. The most innovative approaches to AI-era education are concentrated in expensive private schools. The gap between how children of tech leaders are being educated and how everyone else's children are educated is widening, not narrowing.

Teachers unprepared. Fewer than one in three teachers report moderate confidence with classroom AI, while 45% feel only slight or no confidence at all. You cannot build the next generation of AI-capable, deeply human learners without adults in the room who understand the landscape.

No system for identity. Schools teach subjects. Almost none have curricula designed around what Berkeley's Greater Good Science Center calls the foundational question of this era: what does it mean to live a meaningful human life when machines can match your outputs?

Bright Spots Worth Watching

A few schools and organizations are taking the challenge of raising AI-resilient future leaders more seriously:

Alpha School

Alpha School (Austin, Texas) has built the most discussed alternative model currently operating at scale.

Their "2 Hour Learning" model uses AI to provide personalized 1:1 instruction, completing core academics in the morning while afternoons focus on life skills, passion projects, and workshops. As Principal Joe Liemandt said on The Knowledge Project, it's about "building an educational system that's going to be ready for when AI comes, which means a ground-up rewrite of the skills kids need and how should they spend the day."

On MAP Growth, a third-party standardized test, Alpha reports that its students score in the top 1-2% nationally and achieve roughly 2.3x the annual growth of their peers. But the model draws both intense interest and serious criticism.

Alpha asserts that students progress more quickly than peers, but these claims rely on internal analyses and have not been independently verified. Stanford education expert Thomas Dee has raised concerns that comparing private school students to the broader public school population on standardized tests is not a clean comparison. Tuition runs from $10,000 to $75,000 annually, which raises significant equity questions.

The model has gained support from billionaire Bill Ackman, who called it "a truly breakthrough innovation," and recently received a visit from U.S. Education Secretary Linda McMahon. What Alpha represents, even to skeptics, is proof that the six-hour industrial school day is not a law of nature.

Synthesis

Synthesis (formerly Ad Astra at SpaceX) takes a different approach. Its origins date to 2014, when Elon Musk asked educator Josh Dahn to start an experimental school at SpaceX with the goal of developing students who are "enthralled by complexity and solving for the unknown."

Now a global platform, Synthesis uses team-based simulation games to build strategic thinking, collaborative problem-solving, and leadership. The focus is explicitly on capabilities that cannot be automated: decision-making under uncertainty, ethical reasoning, and working with others on hard problems.

Synthesis is now partnered with the state of Oklahoma and reaches students across 95% of U.S. K-8 schools.

Starter School

Starter School takes a different angle again. It is a part-time online business school for teens aged 12-20 that replaces traditional subjects with industry-based "co-ops": real-world projects in app design, automation, real estate, financial markets, content creation, game design, and 20+ other fields.

Students use the actual tools companies use daily (Figma, Zapier, ChatGPT, Stripe, Canva) and build a portfolio rather than earning a certificate. Notably, students can earn cash for completing challenges.

At $29/month, it is accessible to mainstream families in a way that Alpha and Synthesis are not.

With 4,500+ teens enrolled and over $193,000 earned by students, its core philosophy is worth naming: "industries, not subjects." That phrase is a direct critique of the industrial school model, and it reflects what career-minded parents, including many senior leaders, are actually asking for.

Khan Academy, Finland, and Google

Khan Academy's Khanmigo represents the most accessible mainstream example: an AI tutor that provides personalized guidance without replacing the human need to think through problems. At $4 per month, it represents a model that could scale.

Finland's national curriculum remains a global benchmark. Finnish schools prioritize deep thinking over rote learning, give students significant autonomy, and build in substantial collaborative problem-solving. AI has not changed that foundation, but it does present Finland with the question of whether its philosophy can survive intact in an era of instant AI answers.

Google's AI for Education Accelerator has brought AI training to more than 400 higher education institutions across all 50 U.S. states, offering students practical AI skills with credit recommendations. The program signals one direction for mainstream integration.

The Silicon Valley Paradox

One of the most instructive patterns in this space is what might be called the Silicon Valley Paradox: the people building AI-powered consumer products are among the most restrictive about their children's technology exposure.

The Waldorf School of the Peninsula in Silicon Valley, which famously bans screens and teaches children of eBay, Apple, Uber, and Google employees through hands-on, physical, creative work, continues to have one of the longest waitlists in the region.

Silicon Valley parents seem to grasp the addictive powers of smartphones and tablets more than the general public does. Whether the same will hold true for generative AI remains to be seen. But the pattern suggests that proximity to the technology increases caution, not enthusiasm, about early childhood exposure.

The Identity Question Nobody Is Asking

Below all the skills frameworks and school models lies a question that rarely makes it into the education conversation.

As AI Cold War author Robert Baginnis framed it: "The deeper work is forming a child whose identity does not depend on a job title that AI may eventually erode."

This is the question most directly connected to human flourishing at work.

The leaders who thrive in the AI era, who find work worth doing rather than just work that pays, are not primarily distinguished by their technical skills. They are distinguished by their sense of self beneath the job description.

For parents who lead organizations, the parallel is direct. The employees who will thrive alongside AI are the ones who know who they are, what they value, and what kind of change they want to make, before the AI tells them what to do.

Children built on that foundation can adapt to any scenario the WEF maps. Children whose identity is located in their credentials, their job function, or their performance on tasks AI can automate, cannot.

The WEF's own language is instructive: "information no longer differentiates people, agency does." Agency is not a software skill. It is a quality of character built over time through genuine struggle, real accountability, and the experience of mattering to someone.

What the Research Suggests for Parents

The following is a synthesis of evidence-based directions, not prescriptions. Different families, different children, different contexts.

Build tolerance for difficulty and friction.

Resilience starts with allowing children to face challenges and solve problems on their own. A child who can sit with a hard question without immediately reaching for an AI answer is building the capacity for judgment that will define high-value human work.

But child psychologist Dr. Becky Kennedy makes a deeper point on the Mostly Human podcast: it isn't just cognitive friction that matters; it's relational friction. "It's through those moments of friction and relationships," she argues. "You didn't get me. I wanted to tell you a story and you're not really listening... Like it's actually all those moments of friction that give us identity and purpose and resilience."

AI, almost by design, removes this friction. It listens without distraction, answers without agenda, never wants to talk about its own day. For a child, that frictionlessness feels easier. But it is precisely the messiness of human interaction, the negotiation, the misattunement and repair, that builds the self. If children increasingly turn to AI for the conversations that used to happen with parents, siblings, and friends, they may be optimizing away the very experiences that make them whole.

Prioritize physical and relational experience.

Sports, music, theater, cooking, building, and working with their hands all develop the embodied presence and interpersonal attunement that AI cannot replicate. These are not extracurriculars. They are the curriculum.

Supervise AI use, especially early.

Researchers recommend that children use generative AI tools only under adult supervision until high school. Unsupervised access can lead to habitual dependency that stunts critical thinking and metacognitive skills.

Your own AI usage will determine how well you're able to guide your children, so it's worth investing in generative AI courses like our highly practical AI Leader Advanced program.

Have honest, ongoing conversations.

Families who talk regularly about AI have better-aligned expectations, less conflict, and more constructive relationships with technology. You don't need a formal "AI family meeting." Work it into everyday conversation.

Watch the identity foundation.

The deeper question under all the tactical guidance is: does your child know who they are beneath their grades, their achievements, and their performance?

That foundation, built through genuine struggle and genuine love, is what no disruption can reach.

Key Takeaways

- The entry-level job crisis is real and structural: With entry-level postings down 35% since 2023 and recent-graduate unemployment at 5.7%, the traditional career ladder is being compressed. Today's children cannot count on rote work to teach them professional skills. They need agency, judgment, and identity development earlier.

- The WEF's four futures scenarios all share one requirement: Whichever path AI takes, the children who will flourish are those with strong AI-complementary skills. Workforce readiness is not about using AI; it's about being irreplaceable in what AI cannot do.

- No single school model has solved this: Alpha School and Synthesis offer compelling proofs of concept, but both are expensive, private, and contested. The most important school-level shift needed is away from rewarding memorization and toward building the EPOCH capabilities: Empathy, Presence, Opinion/Judgment, Creativity, and Hope.

- The identity question is the deepest one: Beneath all skill frameworks and school models lies the question of who a child is when the job description changes. Parents who help children build a self beneath the credential are preparing them for any future.

FAQ: Parenting in the Age of AI

What does the research say about children and AI dependency?

Researchers are increasingly concerned about "intellectual deskilling," where children use AI to bypass the cognitive struggle that builds real capability.

Drawing on Jonathan Haidt's smartphone research, scholars warn that habitual AI reliance can crowd out the development of metacognition, patience, and perseverance.

A common recommendation from researchers is supervised-only AI use until high school, with explicit conversations about when and why to use it, and when to think without it.

What skills should parents focus on building for an AI-driven future?

MIT Sloan's EPOCH framework offers one of the most research-backed answers: Empathy, Presence, Opinion/Judgment, Creativity, and Hope. These are not "soft skills" in the dismissive sense. They are the hardest to develop and the hardest to automate.

Practically: prioritize activities with real stakes, physical complexity, and genuine human interaction. Sports, music, hands-on making, leadership roles, and service to others all build these capacities in ways that screens cannot.

Is AI really threatening my child's future career prospects?

Entry-level job postings have dropped 35% since 2023, with the sharpest declines in white-collar roles that used to train young professionals through rote work.

This doesn't mean your child won't find meaningful work. It means they can no longer follow the traditional path of doing rote tasks to build toward senior roles.

The implication: develop judgment and agency earlier. Experience outside school, real projects, entrepreneurship, and direct engagement with complex problems become more important, not less.

What is Alpha School and should I pay attention to it?

Alpha School is a private K-12 network that completes core academics via AI tutoring in two morning hours, freeing afternoons for life skills and workshops. Alpha reports that its students score in the top 1-2% nationally on MAP Growth, a third-party standardized test.

The school is expensive ($10,000 to $75,000 annually), its academic claims haven't been fully independently verified, and Stanford researchers raise legitimate concerns about selection bias.

But its core insight is significant: most of what happens in a traditional six-hour school day is not the most valuable part. Separating AI-assisted knowledge delivery from human-led character development is a design idea worth watching.

How should I talk to my child about AI and their future?

Honest and ongoing rather than anxious and occasional.

Sam Altman models one approach: frank acknowledgment that AI will be more capable in many ways, combined with clarity about what humans bring that AI cannot (judgment, genuine connection, moral responsibility).

The most useful conversations aren't about AI specifically; they're about identity, values, and what kind of change your child wants to make in the world. A child who knows the answer to those questions can figure out the rest.

What are the biggest things most schools are not doing?

Most schools still optimize for memorization and credential accumulation.

The biggest gaps are: building genuine agency and judgment (not just following instructions), developing the EPOCH capabilities that AI cannot replicate, preparing students to collaborate with AI rather than be replaced by it, and grounding students in a sense of identity and meaning that isn't dependent on a job description.

Countries like Finland provide partial models and schools like Alpha offer provocative experiments. But at scale, the transformation required of mainstream education has barely begun.

What is the "Silicon Valley Paradox" and why does it matter?

The Silicon Valley Paradox refers to the gap between what tech leaders build commercially and what they allow in their own homes. Steve Jobs raised his children without iPads. Bill Gates withheld smartphones until age 14. Peter Thiel allows 90 minutes of screen time per week.

These are the people who best understand the attention and addictive mechanics of what they've built. Their private behavior suggests a level of caution that parents everywhere might take seriously, especially as AI companions and tutors become the next frontier.